Listen to this article

Abstract

We just introduced a new feature called automated column statistics in Firebolt. Automated column statistics provide the query optimizer with up-to-date information on statistical properties of the values in a column. These statistics can help to give better plans and ultimately achieve better performance without having to modify query texts. Once enabled, the statistics are collected transparently and kept up to date incrementally during table updates. In this blog post, we explain how to use the new feature by using a simple example query and then go into detail on how automated column statistics work under the hood.

Background: Join Ordering

In the example query in this blog post, we mainly look at how to improve join performance using automated column statistics. To join two tables, Firebolt builds a hash table of one of the two inputs, with the hash key being the join key. It then streams through the other input, probes the hash table with each key, and optionally outputs the results. In order to keep the hash table fast and in-memory, the query optimizer tries to make sure that we build it from the smaller input. If it chooses the wrong ordering, the hash table might not even fit into the RAM of the engine and needs to be spilled to the SSD. Join ordering has a major influence on the performance of SQL queries. For deciding on the join ordering, the optimizer tries to estimate the cardinality of the two inputs. These inputs can potentially be filtered, aggregated or are the output of another join. Therefore, without knowing the distribution of the data, it is hard for the optimizer to give good estimates even when the input is a table with a simple filter on top. To illustrate this, consider the following example.

Example

Let’s say that we want to perform analytical queries for a video streaming platform. Our fictional platform has 100 million subscribers (Netflix has about 277 million) and has a catalog of 100’000 titles (for some countries, Netflix has 10k). So our database schema could be as follows.

CREATE TABLE movies (title TEXT, duration INT); -- 100'000 rows

CREATE TABLE views (movie_title TEXT, customer_name TEXT, device_type TEXT); -- 5 billion rows

The column device_type has 4 distinct values: TV, Phone, PC, and Other. The customer_name column has 100 million distinct values due to the same number of customers. The movie_title column matches one of the titles in the movies table, so it has 100’000 distinct values. We are now interested in the following two queries:

Query 1: SELECT avg(duration) FROM views JOIN movies ON views.movie_title = movies.title WHERE views.device_type = 'TV';

Query 2: SELECT avg(duration) FROM views JOIN movies ON views.movie_title = movies.title WHERE views.customer_name = 'Alice';

In query 1, we want to know the average duration of movies watched on a TV screen. In query 2, we might want to build a customer-facing dashboard showing statistics about the movie watching behavior of individual customers. In this example, we look at the average duration of shows that Alice watches. Note that both queries follow the exact same syntactic structure, they just filter based on different columns. As a human, it is easy to guess that the selectivities of those two filters are vastly different: A decent percentage of all users likely watches their shows on a TV, so the number of rows left after the filter is likely still very large. In contrast, customer Alice likely only watched a couple of movies, and certainly not the entire movie library. Therefore, we have two syntactically equivalent queries where we still expect widely different cardinalities of intermediate results.

So what does Firebolt’s query planner do? Without more information, it cannot do better than guessing. Firebolt does this by using a basic textbook estimation (inverse square root) for selectivity of the filter. We end up with the following plan structure for both of the queries. In Firebolt’s explain output, the probe side of the join is printed first, and then the build side below. The optimizer puts the views table on the build side because it assumes that the filter is very selective and removes most rows.

QUERY PLAN TEXT

[0] [Projection] avg_0

\_[1] [Aggregate] GroupBy: [] Aggregates: [avg_0: avg(movies.duration)]

| [Types]: avg_0: double precision null

\_[2] [Projection] movies.duration

\_[3] [Join] Mode: Inner [(movies.title = views.movie_title)]

\_[4] [StoredTable] Name: "movies"

| [Types]: movies.title: text null, movies.duration: integer null

\_[5] [Projection] views.movie_title

\_[6] [Filter] (views.device_type = 'TV')

\_[7] [StoredTable] Name: "views"

[Types]: views.movie_title: text null, views.device_type: text null

For query 2, the filter for Alice is indeed very selective. After it, not many views are left, and putting the filtered views table on the build side is an optimal plan. For query 1, however, this is a bad plan. The filter by TV lets a significant number of rows pass through. We end up putting the large views table on the build side of the join. This hash table might end up being larger than the available RAM in the engine, forcing it to spill to the SSD. Because the optimizer does not know the semantics of those columns, we need to give it a better idea of the distribution of values in the columns.

How To Guide The Optimizer

In Firebolt, there are several ways to guide the optimizer. If you need absolute control, you can enable the user-guided optimizer mode. In this mode, the optimizer does not perform join ordering, aggregate push-down, or redundant join removal. Only the user is responsible for writing efficient queries. This is useful for highly tuned heavy-duty queries.

The second option is to use the no_join_ordering hint. With this hint, the optimizer performs as its optimizations but skips the join ordering. The user is responsible for writing the joins in an efficient way.

However, those two options assume that the query texts can be changed and that users know how to tune them. Especially in the case of queries generated by an LLM, one cannot rely on them being well tuned. Additionally, for large queries, it might not even be feasible for a human to properly tune the join order. Firebolt now offers two additional ways to aid the optimizer: automated column statistics and history based statistics! In this blog post, we focus on automated column statistics, while a future blog post will go into detail about history based statistics.

Automated Column Statistics

Coming back to our example of the video streaming platform, how can we improve the join ordering? With automated column statistics, this is now extremely easy. Just run ”alter table … add statistics” and you are ready to go. In our example above, this could look like the following.

ALTER TABLE views ADD STATISTICS (device_type) TYPE ndistinct;

ALTER TABLE views ADD STATISTICS (customer_name) TYPE ndistinct;

Before we dive into the details of what this does under the hood, let us look at the effect that this has on the join order. As mentioned above, for query 2 (filtering by customer_name), the plan is already optimal. It does not change when enabling automated column statistics. However, for query 1 (filtering by device_type), we now get the following changed query plan. The optimizer now knows that filtering for views made on a TV reduces the cardinality, but not as much as it initially assumed when it had no data on the distribution of values in the column. As you can see in the following explain output, the large views table is now on the probe side (top) of the join.

[0] [Projection] avg_0

\_[1] [Aggregate] GroupBy: [] Aggregates: [avg_0: avg(movies.duration)]

| [Types]: avg_0: double precision null

\_[2] [Projection] movies.duration

\_[3] [Join] Mode: Inner [(views.movie_title = movies.title)]

\_[4] [Projection] views.movie_title

| \_[5] [Filter] (views.device_type = 'TV')

| \_[6] [StoredTable] Name: "views"

| [Types]: views.movie_title: text null, views.device_type: text null

\_[7] [StoredTable] Name: "movies"

[Types]: movies.title: text null, movies.duration: integer null

This switched join order has a massive effect on the query performance. We performed a short experiment on a single-node engine, with result caching disabled. In query 2 (filtering by customer_name), the plan already was optimal, and we do not see a change in the query time. This shows that the inference of automated column statistics does not slow down the query planning process. However, for query 1 (filtering by device_type) we get massively improved performance due to the swapped join order. Note that this experiment was conducted on a developer workstation with an i9-14900K processor and not on our cloud offering. Nevertheless, Firebolt conveniently handles the 5 billion row views table in just a couple of seconds.

With just these two simple alter table statements, we massively improved the performance of our example query. Additionally, all future queries can profit from the additional statistics – no changes to the query texts are required. Firebolt keeps the statistics up to date transparently and transactionally consistent.

Statistics Collection

In the following sections, we look under the hood of automated column statistics. We look at how Firebolt collects and reads the statistics that are so easy to add to a table. We also look at the query plans and cardinality estimates in more detail to understand the choices that our optimizer makes during query planning.

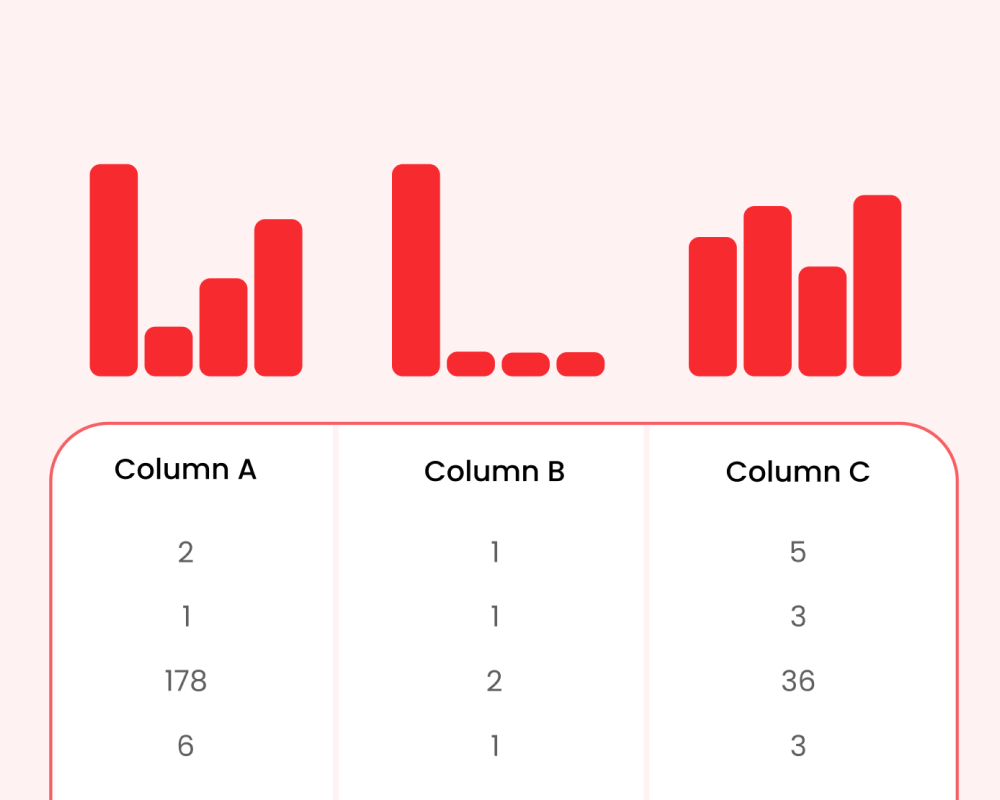

Automated column statistics are built on top of Firebolt’s powerful aggregating indexes. These indexes can pre-compute arbitrary aggregations on top of your tables and keep them up to date transparently during inserts and updates. Our aggregating indexes are transactionally consistent across all engines, so automated column statistics inherit this property. If you modify a table to collect automated column statistics, Firebolt automatically creates a system-managed aggregating index for maintaining the statistics. By default, it collects the distinct count of a column. However, you can specify other statistics you want to collect. You can spot these aggregating indexes in the show indexes command, indicated by the created_by=system column:

show indexes;

index_name | table_name | type | created_by | expression

----------------+----------------+-------------+-------------+-------------------------------------------------------

views__STATS | views | aggregating | SYSTEM | [counting_hll_count_distinct("device_type"),count(*)]

views__STATS_1 | views | aggregating | SYSTEM | [counting_hll_count_distinct("customer"),count(*)]

As you can see, the index uses the counting_hll_count_distinct aggregate function. The function provides an approximate distinct count but in contrast to the hll_count_distinct function, it supports deletions. We built this aggregate function specifically for automated column statistics, but it is useful for all aggregating indexes when there are frequent deletions. We now look at the differences between them.

HyperLogLog Sketches. Our approximate distinct counts use a simple and elegant probabilistic data structure called HyperLogLog (HLL) sketches. It builds on the fact that hash values of a good hash function can be considered random. They then have a probability of 2^-k to have the leading k bits set to 0. In other words, if we hash distinct keys, a share of 2^-k is expected to have k leading 0 bits. Conversely, if we hash all keys in a set and find a maximum of k leading 0-bits, we can approximate the number of distinct input keys by 2^k. In practice, it is more stable to hash each key to one of multiple buckets (using the first bits of the hash value) and counting the maximum leading zeroes (of the remaining hash) for each bucket individually. Finally, the maximum stored in all buckets can then be combined for example using the harmonic mean. Because we only need to store very small counters storing numbers smaller than 64 per bucket, this is very space efficient and fast to evaluate.

Counting HyperLogLog Sketches. A disadvantage of HLL sketches is that their aggregate state does not support deletions. Removing a value that has the same number of leading 0-bits as its bucket does not mean that no other values had the same hash. Therefore, if we used classical HLL sketches in an aggregating index, after each deletion we would need a full table scan to keep the index up to date. A solution for this problem are counting HLL sketches, introduced in 1985. Instead of just remembering the maximum leading zeroes of hash values, counting sketches keep counters of how often it saw each number of leading zeroes. To avoid this increasing the space consumption of aggregate states significantly, we use an enhancement presented at the CIDR 2019 conference. The idea is to count the first 128 values exactly. If the counter passes this value, it continues counting the logarithm of stored keys and updates the value probabilistically. This way, we only need one byte for each counter. While this still increases the space consumption compared to basic HLL sketches, it enables fast deletions without additional table scans. In order to make automated column statistics work well with deletions, we automatically create counting HLL sketches when adding statistics collection to a table.

Statistics Inference

To make the statistics available to the Firebolt optimizer, we extract all tables that a query references and detect whether they have automated column statistics enabled. If they have, we internally run a simple “select counting_hll_count_distinct(column) from table” query. Because we know that an aggregating index with this function exists, we can rely on our automatic aggregating index matching to efficiently calculate the result without a full table scan. By confirming the size of the aggregating index from our metadata, we ensure to only run inference if it will be fast, given that this adds to the planning time. Additionally, we cache the results locally on each node. In our experiments, the statistics inference usually takes less than 20 ms when not cached. At the same time, they can potentially reduce the overall query time massively, for example due to better join ordering.

Reading User-Created Indexes. In addition to creating the aggregating indexes under the hood through “alter table … add statistics”, we can also infer statistics from existing user-created aggregating indexes. To read those during query planning, enable the corresponding query-level setting by running “set infer_statistics_from_indexes=true”. On top of indexes directly using an aggregation that counts distinct values, we can infer distinct counts from additional indexes. For example, from an aggregating index defined as “on views (device_type, count(*))”, we can derive the number of distinct device_type values from the number of groups in the index. Reading user-created aggregating indexes can also help to assess the effect of enabling automated column statistics for the entire table without modifying the table definition.

Cardinality Estimation in Firebolt

If you want to understand the reasoning behind decisions taken by the optimizer, you can use explain(statistics). Let us first look at a query plan when no automated column statistics are available. The optimizer knows the number of rows in each input table, but all other numbers are estimates. In our example queries (this is the same for query 1 and 2), the selectivity of the filter is most interesting. Here, the planner uses a textbook estimate for the filter selectivity: 1/sqrt(input rows). This means we choose the filtered views table as the build side (bottom input) of the join and the movies table as the probe side (top input). For query 2, this is optimal, but for query 1, it is not.

QUERY PLAN TEXT

[0] [Projection] avg_0

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[1] [Aggregate] GroupBy: [] Aggregates: [avg_0: avg(movies.duration)]

| [Types]: avg_0: double precision null

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[2] [Projection] movies.duration

| [Logical Profile]: [est. #rows=6.48341e+07, source: estimated]

\_[3] [Join] Mode: Inner [(movies.title = views.movie_title)]

| [Logical Profile]: [est. #rows=6.48341e+07, source: estimated]

\_[4] [StoredTable] Name: "movies"

| [Types]: movies.title: text null, movies.duration: integer null

| [Logical Profile]: [est. #rows=100000, source: metadata]

\_[5] [Projection] views.movie_title

| [Logical Profile]: [est. #rows=70711, source: estimated]

\_[6] [Filter] (views.device_type = 'TV')

| [Logical Profile]: [est. #rows=70711, column profiles={[views.device_type: #distinct=1]}, source: estimated]

\_[7] [StoredTable] Name: "views"

[Types]: views.movie_title: text null, views.device_type: text null

[Logical Profile]: [est. #rows=5e+09, source: metadata]

After altering the table to add ndistinct statistics to both columns, we now get the following plan for the query views.customer_name = ‘Alice’. The order that was guessed above is already optimal for this query. As you can see, our optimizer detects that and keeps the join order. In the explain(statistics) output, you can see that the optimizer now has the distinct count available. We assume uniform distribution across the distinct values, so our estimated number of rows after the filter is 50. This aligns well with our example: while in reality there will be some difference in viewing behavior between customers, we do not expect that individual customers watch multiple orders of magnitude more than other customers. In the future, we plan to support detecting non-uniform distributions, see later section on future steps.

QUERY PLAN TEXT

[0] [Projection] avg_0

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[1] [Aggregate] GroupBy: [] Aggregates: [avg_0: avg(movies.duration)]

| [Types]: avg_0: double precision null

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[2] [Projection] movies.duration

| [Logical Profile]: [est. #rows=67235, source: estimated]

\_[3] [Join] Mode: Inner [(movies.title = views.movie_title)]

| [Logical Profile]: [est. #rows=67235, source: estimated]

\_[4] [StoredTable] Name: "movies"

| [Types]: movies.title: text null, movies.duration: integer null

| [Logical Profile]: [est. #rows=100000, source: history]

\_[5] [Projection] views.movie_title

| [Logical Profile]: [est. #rows=50, source: estimated]

\_[6] [Filter] (views.customer = 'Alice')

| [Logical Profile]: [est. #rows=50, column profiles={[views.customer: #distinct=1]}, source: estimated]

\_[7] [StoredTable] Name: "views"

[Types]: views.movie_title: text null, views.customer: text null

[Logical Profile]: [est. #rows=5e+09, column profiles={[views.customer: #distinct=1.00193e+08]}, source: automated column statistics]

The situation is more interesting for the second query with the filter views.device_type = 'TV'. With statistics, we now get a swapped join order there, making the plan significantly faster to execute. Still assuming uniform distribution of the values, we now estimate 1.25e9 rows after the filter, making it much larger than the number of movies. We again assume that all distinct values appear equally often. This is of course a crude approximation, but it is the best that any query planner can do when no further statistics are available. As mentioned above, we plan to add histogram sketches in the future to capture any bias in the value distribution.

QUERY PLAN TEXT

[0] [Projection] avg_0

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[1] [Aggregate] GroupBy: [] Aggregates: [avg_0: avg(movies.duration)]

| [Types]: avg_0: double precision null

| [Logical Profile]: [est. #rows=1, column profiles={[avg_0: #distinct=1]}, source: estimated]

\_[2] [Projection] movies.duration

| [Logical Profile]: [est. #rows=8.00787e+11, source: estimated]

\_[3] [Join] Mode: Inner [(views.movie_title = movies.title)]

| [Logical Profile]: [est. #rows=8.00787e+11, source: estimated]

\_[4] [Projection] views.movie_title

| | [Logical Profile]: [est. #rows=1.25e+09, source: estimated]

| \_[5] [Filter] (views.device_type = 'TV')

| | [Logical Profile]: [est. #rows=1.25e+09, column profiles={[views.device_type: #distinct=1]}, source: estimated]

| \_[6] [StoredTable] Name: "views"

| [Types]: views.movie_title: text null, views.device_type: text null

| [Logical Profile]: [est. #rows=5e+09, column profiles={[views.device_type: #distinct=4]}, source: automated column statistics]

\_[7] [StoredTable] Name: "movies"

[Types]: movies.title: text null, movies.duration: integer null

[Logical Profile]: [est. #rows=100000, source: history]

As you can see in the examples, automated column statistics give the planner better insight into the data distribution. This can lead to much better plans and better performance. These lead to better performance without having to manually tweak your queries.

Future Steps

Histogram Sketches

Fundamentally, query optimizers cannot know all properties of the input data without running the query. Therefore, they always have to rely on estimates. Currently, if the Firebolt optimizer knows the distinct count of a column, it assumes that the values are uniformly distributed. If we do not have more data, this is a sensible assumption. However, looking at our example queries above, the number of users watching movies might be skewed: We might have more users who watch their movies on their TV than on the phone. In the future, we plan to support histogram sketches of the data, which can reflect a possible bias in the distribution of column values.

History-Based Statistics

A future blog post will cover history-based statistics. By recording example queries, the optimizer can then learn cardinalities and base its estimates on them.

Apache Iceberg Tables

Apache Iceberg is an open table format that gains traction because of its wide adoption in different systems. Due to the Iceberg format, one can freely choose a query engine without having to worry about data migration. The Iceberg format supports specifying statistics about the stored data, including distinct counts. However, whether these are available depends on the writer. Like for many other writer improvements covered previously on our blog, one cannot rely on the writer providing these.

Here at Firebolt, we embrace the Iceberg format and handle it as a first-class citizen in our database engine. We will soon release aggregating indexes on Iceberg tables. With this, Firebolt manages the indexes for your existing Iceberg catalog. This means that we also immediately get support for automated column statistics. In the near future, you will therefore be able to enable automatic statistics collection for Iceberg tables, and profit from better statistics in the optimizer, eventually leading to more informed decisions and better plans.

Conclusion

Firebolt can now transparently collect and access statistics about your data, built on top of aggregating indexes. These statistics help the optimizer to make more informed decisions, which can lead to better query plans and therefore significant performance improvements for your queries. Automated column statistics are available now, and the feature will soon be compatible with Apache Iceberg tables. Get started with automated column statistics now!